A standalone proposal for the reform of media and communications regulation in New Zealand

Preliminary Note

This article contains a standalone proposal for the reform of media and communications regulation in New Zealand. It sets out both the theoretical and analytical foundations for reform and a concrete institutional model for a unified regulator.

The immediate driver for its development is the decision of the Broadcasting Standards Authority asserting jurisdiction over The Platform – an internet content delivery system that resembles traditional radio.

However, the origins of the proposal go much deeper than that. It reflects ideas that I have been developing since the Law Commission’s 2013 paper on new media and news media regulation.

Since then there have been a number of initiatives directed towards regulation of Internet content. I have detailed these efforts in earlier articles on this Substack.

The BSA’s decision regarding The Platform has once again raised the question of the BSA’s relevance in the Digital Paradigm. But if the BSA is to go, there must be some form of replacement.

There are a number of models available. The model proposed in the Department of Internal Affairs’ Safer Online Services and Web Platforms paper was heavy-handed and invasive. The Australian Online Safety Act 2021 and the UK Online Safety Act 2023 embody similarly invasive models.

What I propose in this article is a light-handed, less invasive model built on voluntary compliance, which in turn attracts a number of advantages and legal protections.

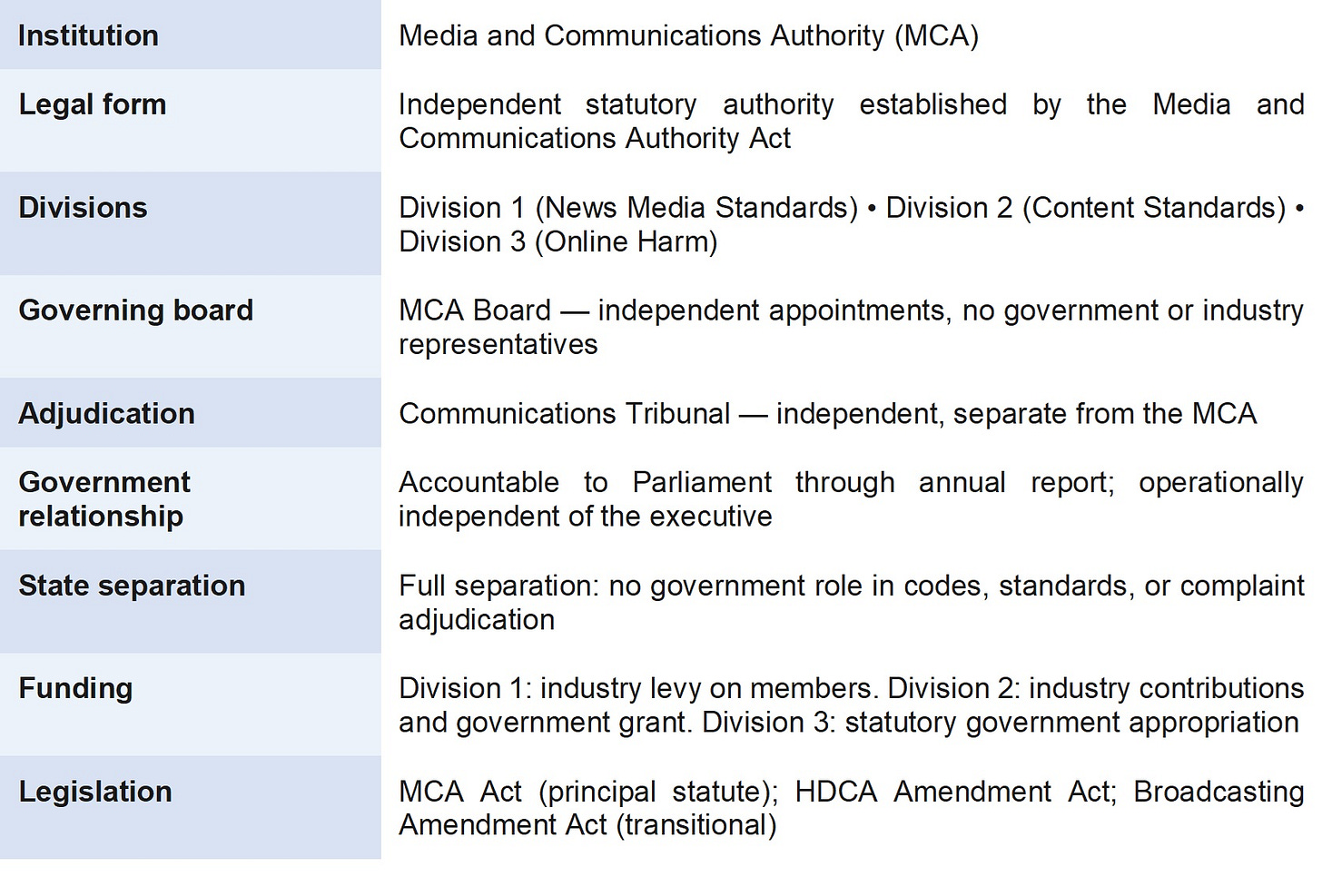

The analysis begins with an examination of the existing legislative framework, in particular the Harmful Digital Communications Act 2015, and considers its adequacy as a basis for online content regulation. It proceeds to a discussion of the nature of harm and the definitional requirements for a workable harm standard, before surveying the principal regulatory models available internationally. The document then develops a proposal for a hybrid regulatory model and describes its institutional expression in the form of a unified Media and Communications Authority (MCA) comprising three operational divisions.

The proposal draws on a substantial body of comparative regulatory experience, including the Australian Online Safety Act 2021, the United Kingdom Online Safety Act 2023, the European Union Digital Services Act, and relevant New Zealand regulatory proposals including the Law Commission’s 2013 Report 128 (A News Media Council for New Zealand), the Law Commission’s Ministerial Briefing Paper MB3 (Harmful Digital Communications), the Department of Internal Affairs’ Safer Online Services and Web Platforms proposals, the Ministry for Culture and Heritage’s 2025 consultation on media regulation, and the Education and Workforce Committee’s March 2026 Inquiry into the Harm Young New Zealanders Encounter Online.

What is proposed is not the censorship of online content, nor is it a proposal to extend state control over the press or broadcasting. Its foundational commitment is to freedom of expression as the default condition of the New Zealand communications environment. Regulatory intervention is justified only where demonstrable harm, properly defined, warrants it.

Part 1: The Case for Reform

1.1 The Current Regulatory Landscape

Momentum towards some form of regulation of online content has intensified over the last five years. The Department of Internal Affairs produced the Safer Online Services and Web Platforms discussion papers. The Ministry for Culture and Heritage released its Media Review proposals in February 2025. Most recently, the Education and Workforce Select Committee published its Report on Online Harms in March 2026.

These reports, accompanied by a groundswell of public concern at the perceived harms caused by internet platforms and the lack of adequate governmental response, mean that consideration must be given to the basis for regulation of online content and the shape of possible regulatory structures.

The rationale for further regulation of online content is based on a perceived inadequacy of existing measures and the suggestion of regulatory gaps. The scope of those inadequacies and regulatory gaps has not always been satisfactorily articulated. In particular, there is a tendency to reach for the concept of safety as the organising principle of regulation. Safety is a somewhat elusive concept upon which to base a regulatory structure. The more productive organising concept is that of identifiable online harms, which permits a more disciplined and principled approach to the scope of regulatory intervention.

New Zealand’s current regulatory architecture for media and communications consists of several distinct regimes operating in parallel, each with its own legislative basis, institutional structure, and scope of coverage. The principal components are:

• The Harmful Digital Communications Act 2015 (HDCA) — a reactive, complaints-based mechanism for individual harmful digital communications, administered by Netsafe as the approved agency and the District Court.

• The Broadcasting Standards Authority (BSA) — a quasi-judicial body exercising jurisdiction over broadcasting standards under the Broadcasting Act 1989, limited to linear broadcast services and expressly excluding on-demand content.

• The New Zealand Media Council (NZMC) — a voluntary self-regulatory body for news publishers, with a complaints adjudication function but no statutory powers.

• The Office of Film and Literature Classification — administering the prior restraint classification regime under the Films, Videos and Publications Classification Act 1993.

Each of these bodies was designed for a specific communications context, and each carries structural limitations when applied to the contemporary digital environment. The BSA’s jurisdiction expressly excludes on-demand content, which now constitutes the majority of audiovisual consumption. The NZMC operates on a voluntary basis with no enforcement powers. The HDCA was designed for interpersonal harmful communications, not for systemic platform regulation.

The result is a fragmented, incomplete, and increasingly inadequate regulatory landscape. Content that causes harm through online platforms falls between the jurisdictional stools of existing bodies. The growing convergence of news media, professional content, and platform distribution has created overlaps, gaps, and inconsistencies that the current architecture cannot manage.

This proposal addresses that landscape through a unified framework: a single converged regulatory authority with three operational divisions, each serving a distinct segment of the communications environment.

1.2 The Inadequacy of the HDCA as a Comprehensive Framework

Because the HDCA is the most relevant existing instrument for online harm regulation, it is worth examining in some detail before proceeding to broader considerations of regulatory design. That examination reveals both genuine strengths and significant structural limitations that confirm the need for the more comprehensive framework proposed in this document.

The HDCA’s Harm Framework

The Harmful Digital Communications Act 2015 defines “harm” exhaustively as “serious emotional distress”. This definition operates within two distinct regimes. In the civil enforcement regime, the District Court must be satisfied that there has been a “threatened serious breach, a serious breach, or a repeated breach” of one or more of the ten communication principles in section 6, and that the breach “has caused or is likely to cause harm to an individual”. In the criminal regime under section 22, the prosecution must establish three cumulative elements: (a) the person posted a digital communication with the intention of causing harm; (b) posting the communication would cause harm to an ordinary reasonable person in the position of the victim; and (c) posting the communication actually caused harm to the victim.

The “ordinary reasonable person in the position of the victim” test in section 22(1)(b) is a mixed objective-subjective standard. It anchors the inquiry not in the sensibilities of the general population but in the position of the particular victim, while still requiring that a “reasonable” person in that position would be harmed. Section 22(2) provides a non-exhaustive list of factors: the extremity of the language, the age and characteristics of the victim, anonymity, repetition, extent of circulation, truth or falsity, and context.

Strengths of the HDCA Approach

Freedom of expression as the default. The HDCA’s harm threshold reflects a foundational commitment of liberal constitutionalism: expression is free by default, and the burden of demonstrating harm falls on those seeking restriction. The Act does not establish a censor, does not require pre-publication approval, and does not empower a government agency to patrol the internet and remove content proactively. It is a reactive instrument, responding to harm that has been demonstrated or concretely threatened. This architecture is consistent with section 14 of the New Zealand Bill of Rights Act 1990 and reflects the position articulated by the Law Commission in its 2012 Ministerial Briefing Paper that “the mere existence of harmful speech is not sufficient to justify additional regulation”.

Evidentiary discipline. The harm threshold imposes evidentiary discipline on regulatory intervention. A complaint must point to a specific communication, identify the principle it breaches, and demonstrate that the complainant has suffered or is likely to suffer harm. This prevents the regulatory machinery from being activated by anticipatory anxiety about what someone might say, or by generalised offence at the existence of particular viewpoints. It ensures that judicial power is engaged only by real harm in the world — a feature that distinguishes the HDCA from prior restraint models that inherently risk chilling lawful expression.

Transparency and accountability. When a court makes an order under the HDCA, the reasons are publicly accessible. The affected party knows what communication was found harmful, what principle it breached, and on what evidentiary basis the court acted. There is a right of appeal. Legal standards are developed through judicial decision-making in a publicly observable manner.

Weaknesses and Limitations

The “serious emotional distress” definition. The definition focuses exclusively on how a person feels about a communication. It does not encompass reputational harm, economic harm, threats to physical safety, or the broader category of dignitary harm. As Gavin Ellis has argued, there is a danger of conflating “that which harms” with “that which offends” — the definition’s subjectivity creates an unstable boundary between legitimate legal intervention and mere offence-taking.

Conversely, the “serious” qualifier may set the threshold too high for many genuine harms. In the foundational case of Police v B (Iyer), the District Court held that evidence the complainant was “very depressed”, “almost crying”, and needed someone with her for support was insufficient to meet the threshold of serious emotional distress — this in a case involving the posting of intimate photographs by an estranged spouse in the context of a protection order. The High Court on appeal found this assessment erroneous. Downs J articulated four indicia — intensity, duration, manifestation, and context — but acknowledged that proof remains “part fact, part value judgment”. The Law Commission itself used the term “substantial emotional distress” in its recommendations; the legislature adopted “serious emotional distress”, a threshold that creates evidentiary difficulties and relies substantially on self-reporting.

“Ordinary reasonable person in the position of the victim”: a potentially unstable standard. Ellis has identified a fundamental conceptual tension in the section 22(1)(b) test. Traditional tests for assessing the impact of communications deliberately invoke the generality of social values. The HDCA test departs from this by anchoring the inquiry in the “position of the victim”, which potentially replaces that deliberate generality with a narrow set of specifics defined by the victim’s belief systems, attitudes, and sensitivities. The “ordinary reasonable person” qualifier may constrain this somewhat, but the direction of travel is toward a more particularised and potentially more sensitive standard.

Structural inadequacy for platform-mediated harm. The Act was never designed to address platform complicity in harm, systemic risks to democratic participation, or the cumulative societal effects of online abuse. It was enacted in 2015, before the full implications of algorithmic amplification, platform design, anonymous volumetric harassment, and AI-generated content had become clear. As a regulatory system for dealing with online content broadly, it is structurally inadequate. It cannot address the harms that arise from recommender systems, dark patterns, and platform architecture rather than from any individual item of content. It places the full burden of enforcement on individual victims. Its single harm standard is too undifferentiated for the variety of online harms now recognised.

The direction of travel internationally — toward platform duties, systemic risk assessment, differentiated content categories, and empowered regulators — reflects a recognition that individual harm-plus-complaint models, while necessary, are not sufficient. New Zealand’s current reform landscape signals an awareness that the HDCA alone cannot bear the regulatory weight that the online environment now demands. The HDCA remains a necessary component of any future regulatory architecture, but it is not, and cannot be, a sufficient one.

1.3 Defining Harm

If harm is to be the basis for regulatory intervention in online communications, the concept must be carefully defined. The definition must delineate the scope of regulatory intervention (and therefore the scope of platform duties), and it must provide the conceptual foundation for the proportionality analysis required by freedom of expression guarantees. An overly broad definition risks chilling legitimate speech; an overly narrow definition fails to capture the harms that justify regulatory intervention.

A workable harm definition for the purposes of platform regulation must satisfy several requirements. First, it must be grounded in demonstrable adverse effects — not in subjective feelings, offence, or mere discomfort. Second, it must have systemic scope, encompassing not only harmful content but also harmful platform design, algorithmic amplification, and recommender system failures that create, facilitate, or amplify risks of harm at scale. Third, it must incorporate a proportionality requirement: the severity of the harm must be weighed against the restriction on expression that any regulatory response would entail. Fourth, it must expressly exclude mere offence, disagreement, discomfort, or exposure to ideas that are controversial or unpopular.

The definition must also distinguish between illegal content — content that constitutes a criminal offence under applicable law, which should be subject to mandatory removal or restriction — and legal-but-harmful content, which does not constitute a criminal offence but may cause or contribute to demonstrable harm. Legal-but-harmful content requires a different and more nuanced regulatory approach centred on risk mitigation, user empowerment, and transparency rather than removal.

HARM DEFINITION

For the purposes of platform regulation, “harm” means a demonstrable adverse effect — more than trivial and supported by empirical, clinical, or reasonably inferable evidence — on the physical safety, mental health, wellbeing, dignity, or fundamental rights of an individual or identifiable group. Mere offence, discomfort, disagreement, or exposure to controversial or unpopular ideas does not constitute harm. In the platform regulation context, harm extends to adverse effects arising from platform design, algorithmic amplification, and recommender system failures, not only from individual items of content.

This definition is purposive and constrained. It is targeted at demonstrable adverse effects rather than at subjective responses to ideas or viewpoints. It encompasses systemic harms as well as individual harms. And it is proportionality-aware: consistent with NZBORA section 5, ECHR Article 10(2), and ICCPR Article 19(3), any regulatory intervention must be justified by reference to the severity of the harm it seeks to prevent or mitigate, measured against the restriction on expression it imposes.

Part 2: A Hybrid Regulatory Framework

2.1 The Proposed Model: Hybrid Code-Compliance and Reactive-Harm

The proposed framework is a hybrid code-compliance and reactive-harm model with co-regulatory elements. It addresses aspects of systemic harms, provides a mechanism for redress of individual or group complaints about content, and does not unduly fetter the information flows, commentary, discourse, and dialogue that internet platforms enable.

The framework rests on three structural elements:

• Codes of practice and conduct developed primarily by industry but subject to regulatory guidance, review, and registration — addressing systemic platform responsibilities for harmful content, including matters of algorithmic design and the use of algorithms to filter or rank otherwise legitimate content.

• A reactive-harm complaints mechanism — available for individual cases of harm, enabling affected individuals or groups to seek redress through an accessible, low-cost process extending and refining the existing HDCA framework.

• A regulatory backstop power — vested in the regulator, exercisable if industry fails to develop adequate codes, codes are deficient, or compliance is inadequate.

The framework also has an important structural characteristic that distinguishes it from more interventionist models: it extends coverage beyond online platforms to encompass news media standards and professional content standards, creating a unified institutional home for all media regulation in New Zealand. This extension is described in Part 4.

2.2 Codes of Practice: The Code-Compliance Element

In the first instance, a large degree of responsibility is vested in industry, which develops codes of practice or conduct that set standards for the prevention of online harms. The substantive content of codes is determined by industry participants or industry associations. An example of such a code may be found in the Aotearoa New Zealand Code of Practice for Online Safety and Harms, which addresses key areas including bullying, child safety, disinformation, harassment, hate speech, and misinformation.

Codes address not only content standards but also systemic issues such as algorithmic design and the use of algorithms to filter or rank otherwise legitimate content. The development of codes is cooperative: although primary responsibility is vested in industry, the regulator may issue guidance, a request, or in extremis a legislative notice specifying the scope and objectives of the codes to be developed. Industry participants — individually or through industry associations — develop draft codes through a consultative process that may include public submission rounds.

The regulator reviews the codes and registers them. A critical aspect of such review is to ensure a proper proportional balance is achieved to protect freedom of expression. Once registered, the codes become enforceable. Platforms or service providers must comply with the relevant code provisions.

A compliance safe harbour — modelled on sections 23 to 25 of the HDCA — attaches to platforms that comply with registered codes, providing protection from certain civil liability in respect of the regulated conduct.

No government department has any involvement in developing the content of codes other than the ability to make submissions as part of a public submission round. This ensures a strict separation between the State and the regulatory process. Any suggestion of state involvement, especially in any area that might involve an interference with or restriction of the freedom of expression, must be avoided, lest the integrity of the process be compromised and the regulatory authority be seen as a quasi-censorship arm of the State.

2.3 The Reactive-Harm Element

The reactive-harm element is available for individual cases of harm, enabling affected individuals or groups to seek redress through an accessible, low-cost process. The existing Communications Principles from the HDCA section 6 are maintained. Redress is sought against platforms that distribute content causing harm, or against individuals who author harmful content.

The approved agency function — currently performed by Netsafe under the HDCA — is retained as a triage mechanism, with unresolved complaints referred to the Communications Tribunal. The approved agency cannot be a government department and must operate independently of the regulatory authority. Determinations by the Tribunal are subject to appeal to the High Court.

The harm definition for the purposes of the reactive-harm element is the updated definition set out in Part 1 of this document, replacing the HDCA’s current definition of serious emotional distress with the broader and more precise formulation that encompasses physical safety, mental health, wellbeing, dignity, and fundamental rights.

2.4 Building the Framework: Institutional Requirements

Legislation is necessary to establish the regulatory authority and define its role, the process for settling codes, the definition of a responsible platform, and the enforcement and appeals pathway. The key legislative and institutional elements are:

• The regulatory authority — established by statute, appointed by Government but independent of Government in its operations and decisions.

• ‘Responsible platform’ definition — defined by legislation to capture online services enabling user-generated content, content sharing, search, or interpersonal communication, with a material New Zealand user base. Participation in code development is compulsory for responsible platforms; code compliance is voluntary but attracts safe harbour protection.

• The Communications Tribunal — an independent adjudicative body, separate from the regulatory authority, hearing and determining allegations of code non-compliance and unresolved HDCA complaints, with a right of appeal to the High Court on questions of law.

• HDCA amendment — the Harmful Digital Communications Act 2015 is retained but amended to update the harm definition, extend platform-level liability, connect the approved agency triage function to the Communications Tribunal, and confirm that the approved agency cannot be a government department.

• Existing institutional infrastructure — the BSA’s expertise in broadcasting standards, Netsafe’s experience with harmful digital communications, and the Classification Office’s role in content classification are all available to be adapted or built upon.

New Zealand’s constitutional framework — particularly NZBORA section 14 (freedom of expression) and section 5 (reasonable limits demonstrably justified in a free and democratic society) — provides the principled basis for the proportionality analysis that the hybrid model requires.

This proposal is not a proposal for regulation of mainstream media, and it is not a proposal for the replacement of the Broadcasting Standards Authority or the New Zealand Media Council as such. Rather — as Part 4 describes — it proposes a unified institutional structure that incorporates all these functions under a single authority, while preserving the distinct regulatory regimes that are appropriate for each segment of the communications environment.

Part 3: The Case for a Unified Authority

3.1 From Three Functions to One Institution

The regulatory framework described in Part 2 addresses online platform harm. But the broader challenge of media regulation in the digital age, encompassing news media standards, professional content standards, and platform harm regulation, calls for a unified institutional response.

Three distinct regulatory functions require institutional homes:

• News media standards — the fourth-estate accountability and editorial standards function currently served by the New Zealand Media Council (on a voluntary basis) and the BSA’s news and current affairs jurisdiction.

• Professional non-news content standards — the content standards function for internet-only broadcasters, on-demand audiovisual services, streaming services with a New Zealand presence, and other professional content publishers that do not meet the definition of news media.

• Platform harm regulation — the hybrid code-compliance and reactive-harm framework described in Part 2.

This proposal collapses the three distinct bodies that would otherwise be required into a single institution: the Media and Communications Authority (MCA). The Communications Tribunal is retained as an independent adjudicative body reporting to no part of the MCA. All substantive principles and rationales from the hybrid framework are preserved in full.

The unification is structural and administrative, not substantive. The three distinct regulatory regimes continue to operate with their own scopes, codes, and incentive structures. They become three operational Divisions of the one Authority rather than three separate institutions.

3.2 The Case for Unification

Several considerations support a unified authority:

• Institutional coherence. A single authority presents a coherent regulatory face to the public, to regulated entities, and to government. The boundaries between news media, professional content, and platform harms are increasingly porous — a news organisation is also a content publisher and distributes via platforms that carry harmful communications. A single authority can manage these overlaps without inter-institutional coordination failures.

• Regulatory efficiency. Three separate institutions require three governance boards, three sets of administrative infrastructure, three funding arrangements, and three public accountability frameworks. A single authority with three divisions is substantially more efficient and proportionate to New Zealand’s scale.

• Consistency of principle. The foundational principles of the framework — freedom of expression as default, voluntary membership with incentives, technology neutrality, strict separation from state content control, and harm as the threshold for intervention — apply across all three tiers. A single authority is better placed to develop and maintain consistent application of those principles.

• New Zealand scale. New Zealand’s media market is small. The regulated population across all three tiers is relatively modest compared with larger jurisdictions. Three separate regulatory institutions would impose disproportionate compliance and governance costs. The Australian, British, and EU models that operate multiple regulatory bodies do so in market contexts many times larger.

• Precedent. The Law Commission’s Report 128 itself recommended a single converged standards body to replace three pre-existing institutions (the Press Council, the BSA’s news functions, and OMSA). The unification proposed here extends that logic to the full scope of digital media regulation.

3.3 What Unification Does Not Mean

Unification is not amalgamation of the regulatory regimes. The three divisions operate under distinct statutory mandates, distinct codes, and distinct membership or participation frameworks. A news publisher that is a member of Division 1 is not subject to Division 2 codes. A social media platform subject to Division 3 platform duties does not become a ‘news media organisation’ by reason of operating within the same regulatory authority.

The MCA does not have a single complaints process applying to all regulated entities. Each division maintains its own complaints pathway, its own codes, and its own standards adjudication. What is shared is the governing board, the administrative infrastructure, the legal framework, the Communications Tribunal interface, and the foundational principles.

The voluntary character of Divisions 1 and 2 is fully preserved. No element of the three divisional substantive frameworks is altered by the institutional consolidation.

4.2 Governing Board

The MCA is governed by a single board of between seven and nine members. Board members are appointed by an independent appointments panel on the basis of relevant expertise. The following principles apply to board composition:

• No current employee, officer, director, or shareholder of a regulated entity may serve on the board.

• No current or recent government official or political appointee may serve on the board.

• Board members must collectively have expertise spanning: law and constitutional rights; journalism and editorial standards; digital technology and platform operations; consumer protection.

• Board members serve fixed terms and are removable only for specified cause.

The board sets overall policy, appoints divisional leadership, approves the annual report, and is responsible for the MCA’s financial management and accountability. The board does not adjudicate individual complaints; that function belongs to each division’s standards panel and, on escalation, to the Communications Tribunal.

4.3 Divisional Structure

Each division is operationally autonomous within the MCA. Each division has:

• A Divisional Director appointed by the MCA Board.

• A Divisional Standards Panel — a standing body of appropriately qualified persons who adjudicate complaints within that division.

• Its own codes of practice, developed under the process prescribed for that division.

• Its own membership or participation register.

• Its own complaints and enforcement pathway.

The Divisional Director reports to the MCA Board. The Divisional Standards Panel is operationally independent of the Board in its adjudicative functions and is not subject to direction on individual complaints.

4.4 Shared Functions

The following functions are shared across all three divisions and managed centrally by the MCA:

• Legal and constitutional compliance. The MCA’s legal team reviews all codes across all three divisions for consistency with section 14 of the New Zealand Bill of Rights Act 1990 before registration.

• Research and monitoring. The MCA conducts cross-divisional research on media trends, harm patterns, and the effectiveness of the regulatory framework.

• Public engagement. A single public-facing communications function, providing information about the MCA’s three divisions and how to make complaints.

• Tribunal referrals. All three divisions use the same Communications Tribunal as their enforcement and escalation backstop.

• Annual reporting. A single annual report to Parliament covering all three divisions.

4.5 Independence and Separation from the State

The MCA is constitutionally and operationally independent of the executive government. The legislation establishing the MCA must contain explicit provisions preventing any minister or government department from giving directions to the MCA about the content of codes, the outcome of complaints, or the exercise of any regulatory discretion. This prohibition extends to all three divisions.

The elements of Division 3 (platform duties) require a statutory basis. That statutory basis creates regulatory obligations but does not give the government direction over how those obligations are fulfilled. The content of the codes that give effect to Division 3 obligations is determined by industry and registered by the MCA, not prescribed by government.

This structural separation is the most important feature of the entire framework. The experience of jurisdictions where government has acquired influence over media regulation — including through indirect means such as funding conditions or appointments processes — demonstrates the ease with which that influence can be used to chill expression and compromise the integrity of regulatory bodies.

Part 5: The Three Divisions

DIVISION 1 — News Media Standards

5.1 Scope and Function

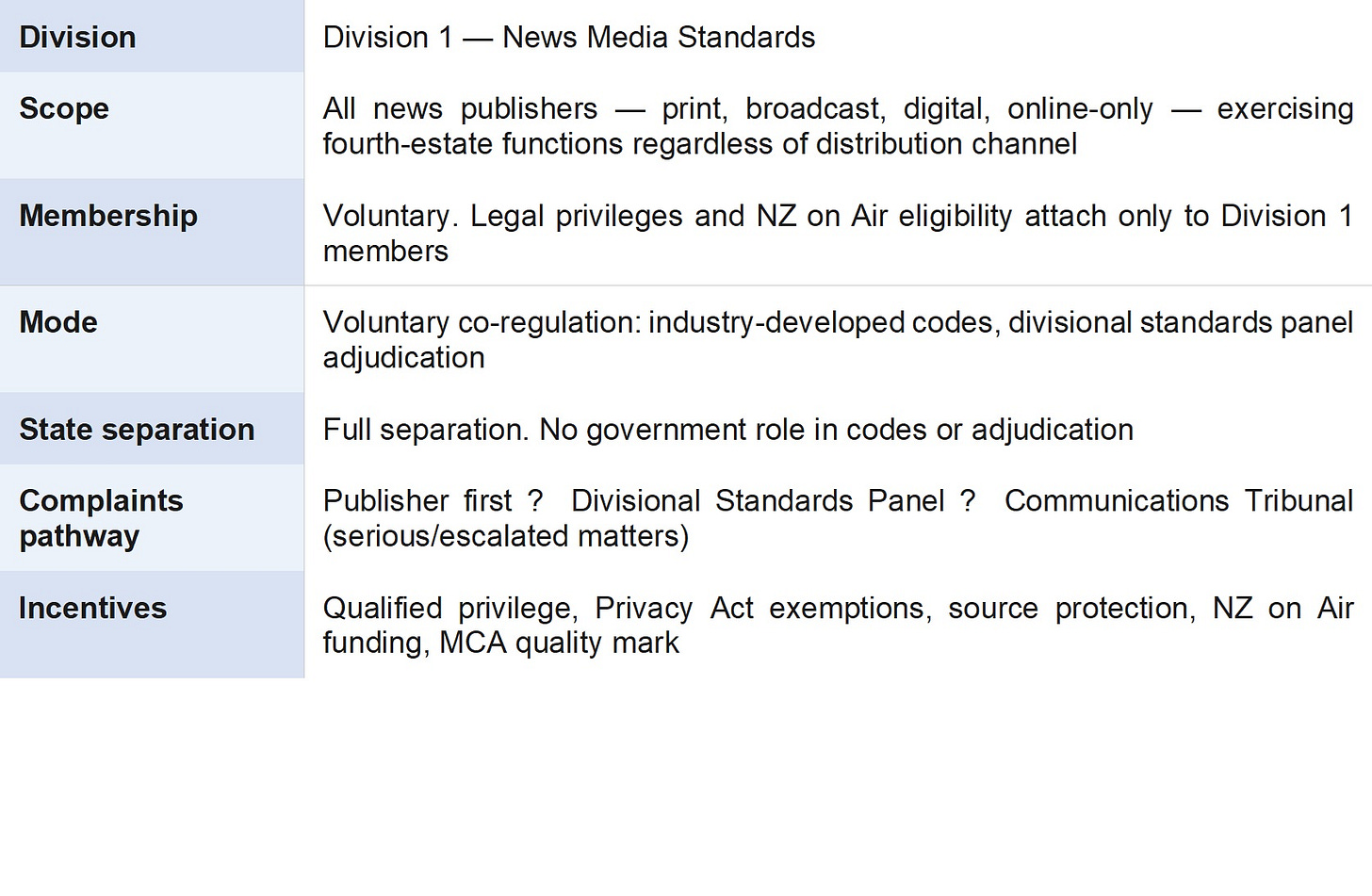

Division 1 is the successor to the New Zealand Media Council and assumes the news and current affairs functions of the Broadcasting Standards Authority. It is the operational unit of the MCA responsible for the standards, accountability, and complaint adjudication of news media organisations.

5.2 Statutory Definition of News Media

NEWS MEDIA DEFINITION

A ‘news media organisation’ is a body corporate or other publishing entity that: (a) devotes a significant element of its publishing activities to the generation, aggregation, or dissemination of news, current affairs, information, or opinion of current value; (b) disseminates that content to a public audience; (c) publishes on a regular and not merely occasional basis; and (d) is subject to a code of ethics and to the jurisdiction of Division 1 of the MCA. The definition is technology-neutral and encompasses publishers distributing by print, broadcast, on-demand, online streaming, podcast, or any other means. Social media platforms, search engines, messaging platforms, and purely user-generated content platforms are expressly excluded.

5.3 Voluntary Membership and Incentives

Division 1 membership is entirely voluntary. The following incentives attach exclusively to Division 1 members:

• Qualified privilege in defamation proceedings; exemptions under the Privacy Act 2020; protection of journalist source confidentiality.

• Eligibility for New Zealand on Air support for news, current affairs, and factual programming.

• The MCA quality mark: a public signal of editorial accountability and adherence to professional standards.

• Safe harbour: Division 1 members in compliance with their registered codes receive a safe harbour from certain civil liability attaching to publication.

These incentives are designed so that any serious news publisher operating in New Zealand will find it strongly in its interest to join Division 1. The voluntary model avoids the constitutional difficulties that would arise from compulsory regulation of the press, while the incentive structure ensures that the division will have meaningful coverage of the news media landscape.

5.4 Relationship with the BSA

Division 1 assumes the BSA’s jurisdiction over news and current affairs content across all platforms, including linear broadcast. The BSA retains jurisdiction over entertainment content on linear broadcast platforms during the transitional period. Standards of good taste and decency and protection of children in broadcast news and current affairs remain subject to concurrent consideration by Division 1 and the BSA under a memorandum of understanding, until the BSA’s transitional role concludes.

DIVISION 2 — Content Standards

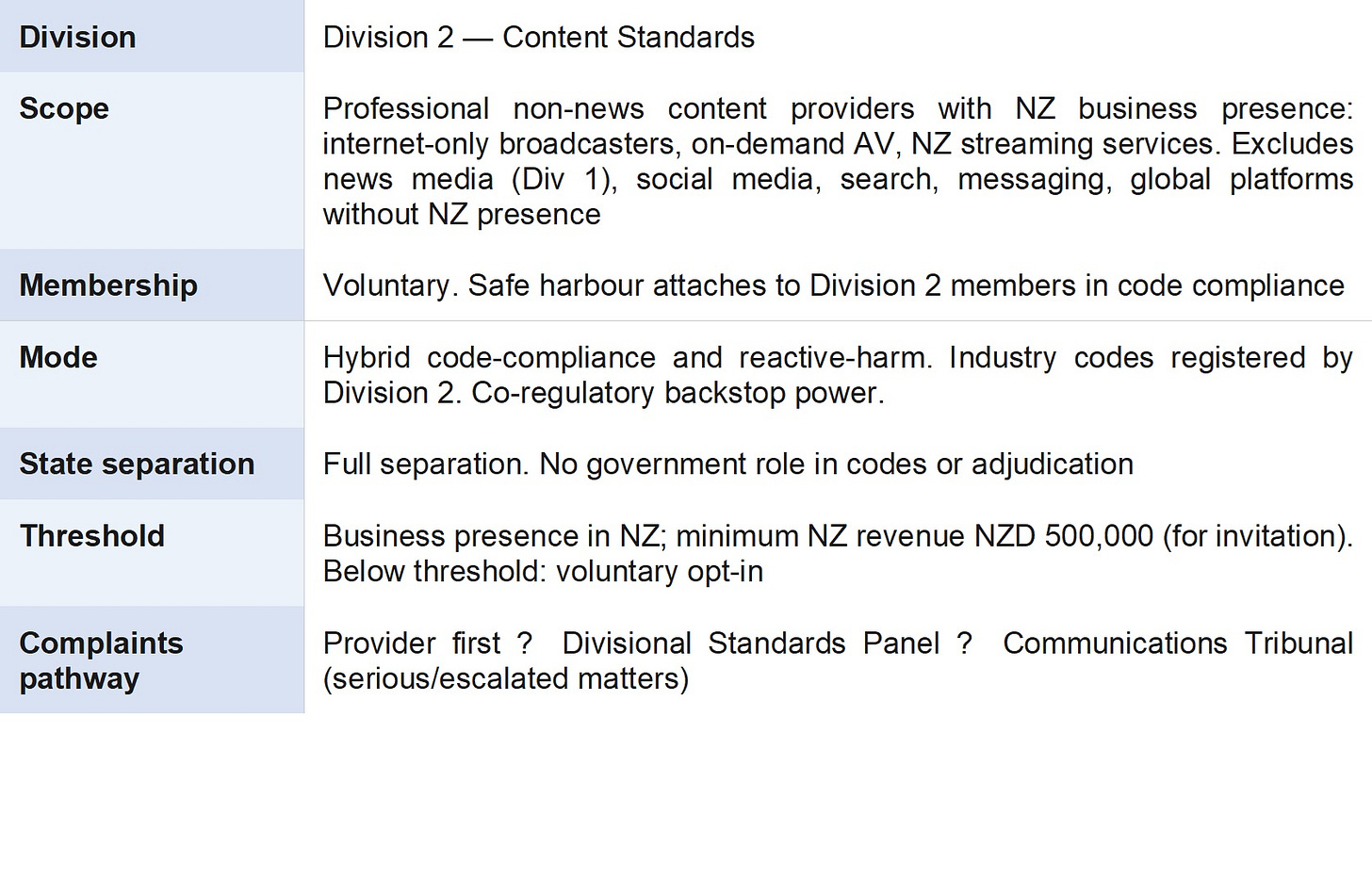

5.5 Scope and Function

Division 2 is the operational unit of the MCA responsible for the content standards of professional non-news content providers. It addresses an important regulatory gap: professional content delivery by internet-only broadcasters, on-demand audiovisual services, streaming services with a New Zealand presence, and other professional content publishers that do not meet the definition of news media.

5.6 Codes of Practice

Industry associations or individual providers develop draft codes of practice through a consultative process. Division 2 reviews and registers those codes. The Division 2 Divisional Director may issue guidance on the scope and objectives of codes but does not prescribe their content. No government department participates in code development other than by making submissions in a public consultation round.

The Division 2 backstop power — to issue mandatory content standards if industry fails to develop adequate codes — is a last-resort measure subject to full freedom of expression proportionality analysis. It is exercised by the Divisional Director with approval of the MCA Board, not by the government.

5.7 Global Platforms

Global streaming platforms (Netflix, Amazon Prime, Disney+, Apple TV and equivalents) without a New Zealand business presence are excluded from Division 2. Jurisdictional complexity, enforcement difficulties, and the risk of market withdrawal justify this exclusion. Jurisdiction over such platforms, to the extent it exists at all, is best pursued through international regulatory coordination rather than domestic compulsion.

DIVISION 3 — Online Harm

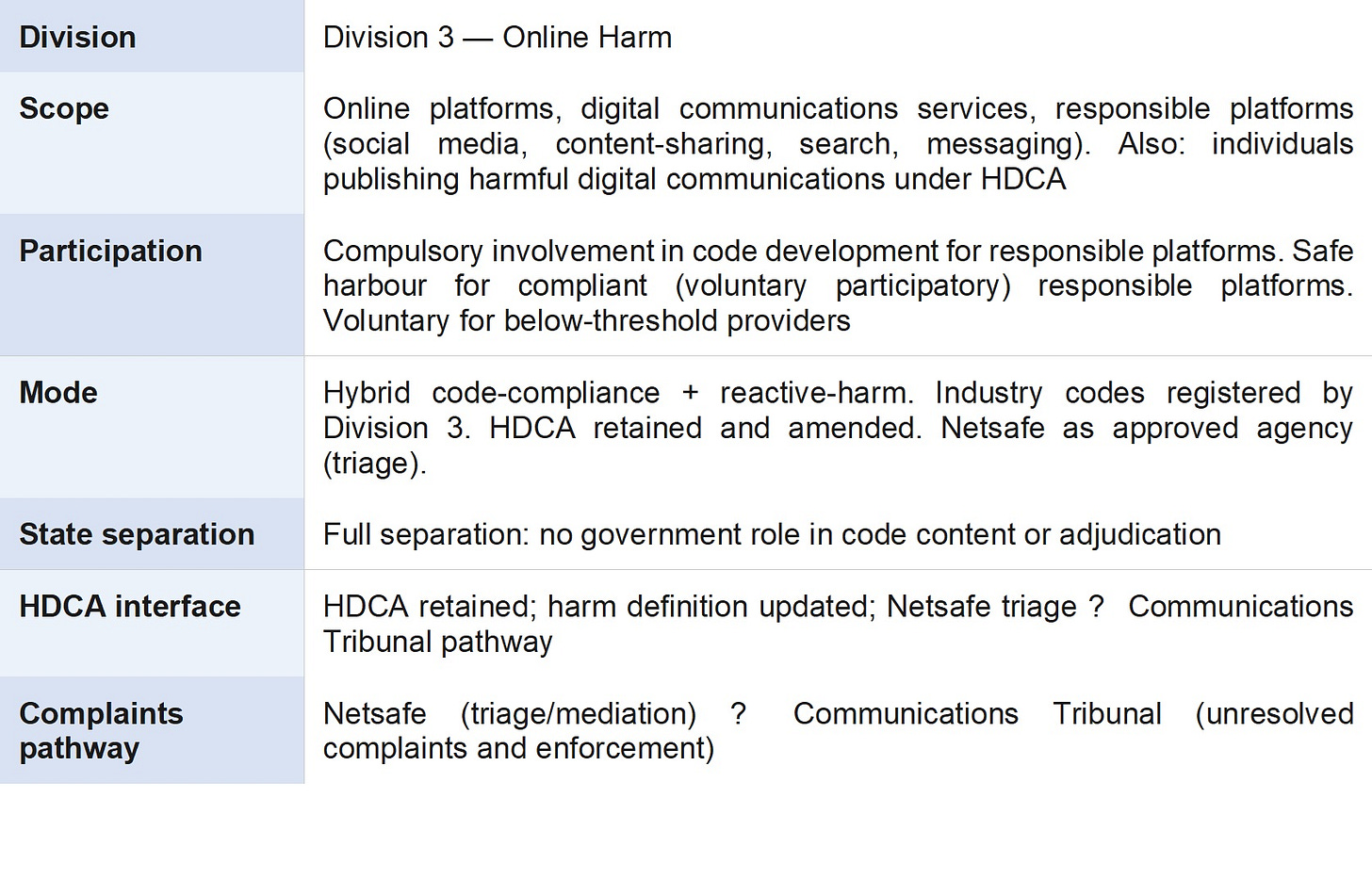

5.8 Scope and Function

Division 3 is the operational unit of the MCA responsible for platform-level harm regulation. It combines the functions of the platform harm regulator with the governance and administrative infrastructure of the MCA. The substantive model — hybrid code-compliance and reactive-harm, incorporating and extending the Harmful Digital Communications Act 2015 — is as described in Part 3.

5.9 The ‘Responsible Platform’ Definition

RESPONSIBLE PLATFORM DEFINITION

A ‘responsible platform’ is any person or entity that: (a) operates an online service that enables user-generated content, content sharing, search, or interpersonal communication; (b) makes that service available to users in New Zealand; and (c) has a material New Zealand user base (to be defined in regulations by reference to user numbers or New Zealand revenue). The definition includes social media platforms, content-sharing services, search engines, messaging services (to the extent not end-to-end encrypted), and online marketplaces. It excludes individual users, small personal websites, and bodies covered by Divisions 1 or 2 in respect of their Division 1 or Division 2 activities.
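
Read as a test, the definition has three cumulative limbs followed by a set of exclusions. The sketch below is one illustrative way of expressing that structure in Python and is not part of the proposal. The field names, the `is_responsible_platform` function, and in particular the `HYPOTHETICAL_MIN_NZ_USERS` figure are assumptions made for illustration only, since the proposal leaves the threshold to regulations. The sketch also does not model the carve-out for end-to-end encrypted messaging.

```python
from dataclasses import dataclass

# Placeholder only: the proposal leaves the "material New Zealand user base"
# threshold to regulations. This number is invented for illustration.
HYPOTHETICAL_MIN_NZ_USERS = 100_000


@dataclass
class Service:
    enables_ugc_sharing_search_or_messaging: bool  # limb (a)
    available_in_nz: bool                          # limb (b)
    nz_users: int                                  # input to limb (c)
    is_individual_or_small_personal_site: bool = False   # exclusion
    is_division_1_or_2_activity: bool = False             # exclusion


def is_responsible_platform(s: Service) -> bool:
    """Apply the exclusions first, then require all three limbs together."""
    if s.is_individual_or_small_personal_site or s.is_division_1_or_2_activity:
        return False
    return (
        s.enables_ugc_sharing_search_or_messaging
        and s.available_in_nz
        and s.nz_users >= HYPOTHETICAL_MIN_NZ_USERS
    )
```

The point of the sketch is that the limbs are conjunctive: a large service that is not available in New Zealand, or a local service below whatever threshold the regulations set, falls outside the definition.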

5.10 The Updated Harm Definition

The harm definition set out in Part 1 is the operative definition for Division 3 and for the amended HDCA:

HARM DEFINITION

‘Harm’ means a demonstrable adverse effect — more than trivial and supported by empirical, clinical, or reasonably inferable evidence — on the physical safety, mental health, wellbeing, dignity, or fundamental rights of an individual or identifiable group. Mere offence, discomfort, disagreement, or exposure to controversial or unpopular ideas does not constitute harm. In the platform regulation context, harm extends to adverse effects arising from platform design, algorithmic amplification, and recommender system failures, not only from individual items of content.

5.11 Code-Compliance Element

Responsible platforms are required to participate in the development of industry codes of practice registered by Division 3. No government department participates in determining the content of codes other than by making public submissions.

Code compliance is voluntary, and compliant platforms receive a safe harbour modelled on sections 23 to 25 of the HDCA. The backstop power to impose mandatory standards is reserved for genuine industry failure and is exercised by the Division 3 Divisional Director with MCA Board approval.

5.12 Reactive-Harm Element: HDCA Interface

The Harmful Digital Communications Act 2015 is retained and amended as follows:

• The harm definition is replaced with the updated definition above.

• Platform-level responsibility is expressly addressed, extending the reactive-harm mechanism beyond individual communicators.

• Netsafe is retained as the approved agency, performing triage and mediation. The legislation confirms that the approved agency cannot be a government department and must operate independently of Division 3.

• Unresolved HDCA complaints are referred to the Communications Tribunal.

The complaints pathway under the amended HDCA is: complainant contacts Netsafe → Netsafe attempts resolution through negotiation and mediation → if unresolved, referral to the Communications Tribunal → Tribunal determines and orders → appeal to the High Court on questions of law.
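
The pathway above can be read as a simple linear state machine. The following minimal Python sketch illustrates that reading and is not part of the proposal. The `Stage` names and the `next_stage` and `trace` functions are hypothetical labels. The sketch assumes that a complaint either resolves at the Netsafe stage or moves to the Tribunal, and that a Tribunal determination ends the matter unless an appeal on a question of law is brought.

```python
from enum import Enum


class Stage(Enum):
    NETSAFE_INTAKE = "complainant contacts Netsafe"
    NETSAFE_MEDIATION = "Netsafe negotiation and mediation"
    TRIBUNAL = "Communications Tribunal determines and orders"
    HIGH_COURT = "appeal to the High Court on a question of law"
    CLOSED = "closed"


def next_stage(stage, resolved=False, appeal_on_question_of_law=False):
    """Return the stage that follows `stage` in the amended-HDCA pathway."""
    if stage is Stage.NETSAFE_INTAKE:
        return Stage.NETSAFE_MEDIATION
    if stage is Stage.NETSAFE_MEDIATION:
        # Only unresolved complaints are referred to the Tribunal.
        return Stage.CLOSED if resolved else Stage.TRIBUNAL
    if stage is Stage.TRIBUNAL:
        return Stage.HIGH_COURT if appeal_on_question_of_law else Stage.CLOSED
    return Stage.CLOSED


def trace(resolved_at_netsafe, appeal_on_question_of_law=False):
    """List the stages a single complaint passes through, in order."""
    stage, path = Stage.NETSAFE_INTAKE, []
    while stage is not Stage.CLOSED:
        path.append(stage)
        stage = next_stage(stage, resolved_at_netsafe, appeal_on_question_of_law)
    return path
```

For example, `trace(True)` stops after the two Netsafe stages, while `trace(False, appeal_on_question_of_law=True)` runs through all four stages to the High Court.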

5.13 Division 3 Structural Safeguards

It is essential that the compulsory participation element in Division 3 does not contaminate the voluntary character of Divisions 1 and 2 or create the perception that news media regulation is linked to government-backed compulsion. The following structural safeguards address this:

• Each division has a separate Divisional Director and a separate Divisional Standards Panel. Cross-divisional direction is not possible at the operational level.

• The MCA Act must expressly state that membership of or participation in Division 3 does not affect the voluntary status of Division 1 or Division 2, and vice versa.

• The MCA Board does not adjudicate individual complaints in any division. Adjudication is a function of the Divisional Standards Panels and, on escalation, the Communications Tribunal.

• The MCA Board does not determine the content of codes in any division. Code development is an industry function. The Board’s role is governance and policy, not content regulation.

• Annual reports disaggregate the activities, funding, and performance of each division, maintaining public transparency about how the three functions operate.

Part 6: Shared Institutions

6.1 The Communications Tribunal

The Communications Tribunal is established by the MCA Act as a fully independent adjudicative body. It is not part of the MCA. It exercises jurisdiction conferred by the MCA Act, the amended HDCA, and any other legislation that designates it as an adjudicative body.

The Tribunal’s jurisdiction covers:

• Unresolved HDCA complaints referred from Netsafe (Division 3 pathway).

• Code compliance enforcement matters referred from Division 3 (platform duty breaches).

• Code compliance enforcement matters referred from Division 2 (content standard breaches).

• Serious content standards matters referred from Division 1 (news media standards breaches).

Tribunal members are appointed on the basis of relevant legal expertise and knowledge of the digital communications environment. The Tribunal publishes all its decisions. There is a right of appeal to the High Court on questions of law from any Tribunal determination.

The independence of the Tribunal from the MCA is essential. The MCA is both an industry standards body (in Divisions 1 and 2) and a quasi-regulator (in Division 3). The Tribunal must be insulated from both these functions in its adjudicative role. The concept of an independent Communications Tribunal draws on the recommendation of the Law Commission in its Harmful Digital Communications Ministerial Briefing Paper MB3.

6.2 Netsafe

Netsafe is retained as the approved agency under the amended HDCA. It performs the triage and mediation function for individual harm complaints under Division 3. Netsafe operates cooperatively with Division 3 but independently of the MCA as a whole. The legislation must make clear that Netsafe cannot be a government department and cannot be directed by the MCA in the exercise of its triage and mediation functions.

Netsafe’s resource capacity is reviewed and, if necessary, supplemented to ensure it can perform its expanded role within the Division 3 structure. The approved agency role is not a function of the MCA and must not be structured in a way that gives the MCA indirect influence over complaint triage.

6.3 The Broadcasting Standards Authority

The BSA is reformed and retained during a transitional period with jurisdiction narrowed to entertainment content on linear broadcast platforms. Its news and current affairs jurisdiction transfers to Division 1. Its jurisdiction over online and on-demand content delivered by broadcasters transfers to Division 2.

The BSA’s institutional expertise, codes, and complaint-handling experience are a material asset for both Division 1 and Division 2, and formal knowledge-transfer and cooperation arrangements are established between the BSA and the MCA at the outset. The long-term trajectory is for the BSA’s residual jurisdiction to be absorbed into Division 2 of the MCA by legislative amendment, reviewed at five-year intervals.

6.4 The Classification Office

The Office of Film and Literature Classification continues to operate under the Films, Videos and Publications Classification Act 1993. It is entirely separate from the MCA and is not affected by this framework. The prior restraint model under that Act remains appropriate for its specific purposes and is not displaced. As noted in Part 2, the prior restraint model is best deployed as one instrument in a broader regulatory toolkit, appropriate for the most serious categories of objectionable content. Its institutional expression in the Classification Office remains well-suited to that function.

Part 7: Legislative Architecture

7.1 Primary Legislation Required

The unified model requires the following legislation:

1. Media and Communications Authority Act — the principal statute, establishing the MCA, its three divisions, the governing board, the divisional structure, the Communications Tribunal, the code development processes for all three divisions, the backstop powers of Divisions 2 and 3, the complaints and enforcement pathways, the statutory definition of ‘news media’, the definition of ‘responsible platform’, and the incentive structure for Division 1 membership.

2. Harmful Digital Communications Amendment Act — amending the HDCA to update the harm definition, extend platform-level liability, and connect Netsafe’s triage function to the Communications Tribunal.

3. Broadcasting Amendment Act — transitional amendments narrowing the BSA’s jurisdiction and making provision for the transfer of the BSA’s news and current affairs and online/on-demand functions to Divisions 1 and 2 respectively.

4. Consequential Amendments Act — amending the Privacy Act 2020, the Defamation Act 1992, the Official Information Act 1982, and other relevant statutes to reflect the new ‘news media’ definition and the MCA’s status as the relevant regulatory body.

7.2 Voluntary Character Preserved

The MCA Act must state explicitly that:

• Membership of Division 1, participation in Division 2, and code compliance in Division 3 are voluntary.

• The consequences of non-membership of Division 1 are the forfeiture of the incentives available to members, not legal sanction.

• The consequences of non-participation in Division 2 are the loss of safe harbour protection and the MCA quality mark, not legal sanction.

• The compulsory participation of responsible platforms in Division 3 in code development does not affect the voluntary status of Divisions 1 and 2 nor the voluntary participation of responsible platforms in Division 3 code compliance.

7.3 Freedom of Expression Safeguards

All freedom of expression safeguards apply across the MCA:

• All codes across all three divisions must be reviewed for consistency with section 14 NZBORA before registration.

• The harm definition expressly excludes mere offence and exposure to controversial ideas.

• The Communications Tribunal must conduct proportionality analysis in all enforcement decisions.

• All MCA divisional determinations and Tribunal decisions are subject to appeal to the High Court.

• No government department has any role in determining the content of codes in any division.

• The MCA’s backstop powers (Divisions 2 and 3) are exercisable only where industry self-regulation has genuinely failed, and any mandatory standards issued are subject to the same freedom of expression constraints as the codes themselves.

Part 8: Risks and Mitigations

8.1 Risks of the Unified Model

The unified model carries certain risks:

• Risk: Contamination between divisions. The compulsory character of Division 3 may be perceived as compromising the voluntary character of Divisions 1 and 2, or as bringing news media within a quasi-regulatory structure. Mitigation: Explicit statutory separation of the three divisions. Clear public communication that Division 1 and Division 2 are voluntary and that no regulated entity is subject to obligations across divisions by reason of the unified institutional structure.

• Risk: Board capture. A single board responsible for all three divisions may be susceptible to regulatory capture by one or more of the regulated industries. Mitigation: Strict appointment criteria excluding any connection to regulated entities. Fixed terms. Removal only for specified cause. Transparent appointment process through an independent appointments panel.

• Risk: Governance overload. A single board may lack the bandwidth to provide effective governance across three operationally distinct regulatory regimes. Mitigation: Divisional Directors with substantial operational autonomy. Board focuses on governance, policy, and accountability rather than operational decisions.

8.2 Recommendation

A unified MCA model is preferred for New Zealand’s scale and regulatory context. The institutional efficiencies are significant, the substantive framework of each division is unchanged by unification, and the structural safeguards are sufficient to manage the risks identified above. The Law Commission’s own logic in Report 128, which recommended a single converged body to replace multiple pre-existing institutions, supports the extension of that principle to the full scope of media and communications regulation.

A three-body framework remains a viable alternative if the Government or the media industry considers that the institutional separation of the news media standards function from the platform harm function is essential to maintaining public confidence in the voluntariness of Division 1. In that event, the MCA model can be readily disaggregated by separating Division 1 into a standalone body while retaining Divisions 2 and 3 within the MCA structure.

Part 9: Architecture Summary

9.1 The MCA in the Institutional Landscape

The complete institutional landscape under the unified MCA model:

• Media and Communications Authority (MCA)

◦ Division 1 — News Media Standards (voluntary; news media)

◦ Division 2 — Content Standards (voluntary; professional non-news content)

◦ Division 3 — Online Harm (compulsory code development for responsible platforms; HDCA extended)

• Communications Tribunal (independent; all three divisions; HDCA)

• Netsafe (approved agency; HDCA triage; independent of MCA)

• Broadcasting Standards Authority (transitional; linear broadcast entertainment)

• New Zealand Media Council (continues; transition pathway to Division 1)

• Office of Film and Literature Classification (unaffected; separate)

9.2 What This Proposal Is Not

This proposal should be understood in light of what it does not attempt. It is not a proposal for censorship of online content or for state control over the press. Its foundational commitment throughout is to freedom of expression as the default condition of the New Zealand communications environment.

It is not an attempt to create a universal solution to every problem posed by online communications systems. Unlike the wide-ranging Safer Online Services and Web Platforms proposals of the Department of Internal Affairs, this proposal is anchored to the concept of harm. Whilst this may be seen as a limiting factor, it restricts and ring-fences the scope of interference with robust and often confronting discourse on online platforms. Any regulatory model involving such compromises will not provide an absolute solution. It will not prevent harm. Rather the solution is mitigatory, consistent with the foundational principle that freedom of expression is the default and regulatory intervention requires demonstrable justification.

The model also builds deliberately on existing institutional infrastructure rather than replacing it wholesale. The BSA’s four decades of expertise, Netsafe’s experience with harmful digital communications, the Law Commission’s carefully considered analysis in its various reports, and the voluntary commitment of news media organisations to self-regulation through the New Zealand Media Council are all material assets that the MCA framework is designed to preserve and build upon.

Conclusion

This proposal has addressed two related challenges. The first is the inadequacy of New Zealand’s current online harm regulatory architecture, centred on the Harmful Digital Communications Act 2015, which was designed for interpersonal harmful communications rather than systemic platform regulation. The second is the broader fragmentation of New Zealand’s media regulatory landscape, which leaves significant gaps in coverage and creates inconsistencies that the digital convergence of media and communications has made increasingly apparent.

The proposed framework responds to both challenges through a unified institutional model: a Media and Communications Authority with three operational divisions, each addressing a distinct segment of the communications environment under a consistent set of foundational principles.

The framework’s central commitments are: freedom of expression as the default condition; harm, properly defined, as the threshold for regulatory intervention; strict separation between the State and the content of codes and standards; the use of industry self-regulation as the primary mechanism, backed by a credible regulatory backstop; and an independent adjudicative function vested in the Communications Tribunal.

The proposal draws on comparative experience from Australia, the United Kingdom, and the European Union, while remaining calibrated to New Zealand’s constitutional framework, institutional capacity, and media market scale. It is not the most interventionist model available, but it is argued to be the most appropriate: the model that best balances effective harm mitigation with the protection of the freedom of expression that is the lifeblood of a functioning democratic society.

David Harvey is a former District Court Judge and Mastermind champion, as well as an award-winning writer who blogs at the Substack site A Halflings View, where this article was sourced.

2 comments:

This was a serious high level analytical contribution.

I shudder at the future legal cost that may be compounded for innocent parties "having an opinion" in NZ in conjunction with the opinion of others.

I revert to some cost-effective, safe and practical positions: turn the device off, or turn the sound down, don't look at or listen to the device.

If these simple precautions do not ameliorate potential personal harm, breathe deep, preferably for an extended period.

This expert (including legal) analysis is completely beyond Minister Goldsmith - and also the BSA people - whose main goal is to extend their influence and authority inside the (corrupt) public service as per their cultural marxist convictions.

The Coalition - if serious about its image - would have reformed the media in the first month of its mandate. Did not happen - instead, 3 years of constant destructive denigration. Why? National holds this portfolio. Luxon removed Melissa Lee (a journalist) and installed his henchman, Goldsmith - no action. Go figure!
